OLMo 3


OLMo 3 is the most radically open language model ever released. Allen AI didn’t just drop weights — they released everything: the full 9.3 trillion token training corpus (Dolma 3), all intermediate checkpoints, the complete training code, and the post-training datasets (Dolci). You can trace any behavior back to the exact data and decisions that produced it.

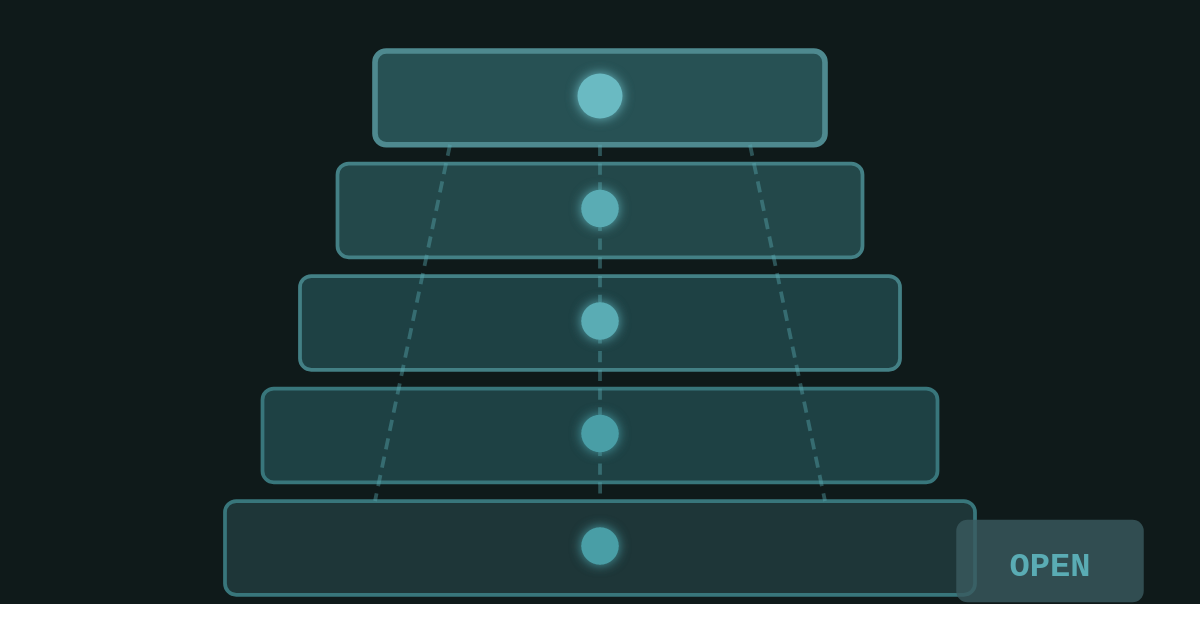

The family comes in 7B and 32B sizes across four variants:

OLMo 3-Base — Foundation for fine-tuning and research

OLMo 3-Think — Reasoning-focused with explicit chain-of-thought

OLMo 3-Instruct — Chat, tool use, multi-turn dialogue

OLMo 3-RL Zero — RL research pathway with verifiable rewards

The flagship is OLMo 3-Think 32B, the strongest fully open thinking model available. It rivals Qwen 3 32B on reasoning benchmarks while being trained on 6x fewer tokens. That matters for reproducibility: a smaller token budget makes a from-scratch rerun cheaper for anyone who wants to verify the results.

The architecture is a dense transformer with a 65K-token context length. The license is Apache 2.0: no commercial restrictions, no usage limitations. OLMo 3 was released in November 2025, with OLMo 3.1 updates in December adding improved reasoning and instruction-following.

Details

Strengths:

Total transparency — Not just open weights, but open data, open training, open checkpoints. You can actually understand why the model behaves the way it does.

Compute efficient: Rivals Qwen 3 32B on reasoning benchmarks with 6x fewer training tokens. Allen AI is showing that you don’t need the largest training budget to compete.

Apache 2.0 — No Llama-style restrictions, no Qwen business limitations. Fork it, merge it, sell it — your call.

Research-grade — Multiple training pathways documented. The RL Zero checkpoints are a gift to alignment researchers.

Weaknesses:

Not quite SOTA — On broad knowledge (MMLU) and science-heavy benchmarks (GPQA), Qwen 3 32B still edges ahead. OLMo 3 is close, not leading.

32B is heavy — Dense architecture means no MoE efficiency tricks. You need real hardware to run the flagship locally.

Smaller ecosystem — Less community tooling, fewer fine-tunes, less momentum than Llama or Qwen communities.
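The hardware cost of a dense model follows from simple arithmetic: every parameter is stored at some number of bits, so weight memory is parameters times bits divided by eight. Here is a rough sketch of that estimate. The bit widths are nominal assumptions (real 4-bit formats carry a little extra per weight for scales), and the result covers weights only, so the KV cache and activations come on top.

```python
def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    bytes_total = params_billion * 1e9 * bits_per_weight / 8
    return bytes_total / 1e9


# Nominal bit widths; an assumption for illustration, not measured figures.
for name, params in [("7B", 7), ("32B", 32)]:
    for label, bits in [("FP16", 16), ("8-bit", 8), ("4-bit", 4)]:
        print(f"{name} {label}: ~{weight_memory_gb(params, bits):.1f} GB")
```

For the 32B model this gives about 64 GB at FP16 and 16 GB at 4 bits, which is why a quantized flagship lands near 20 GB once runtime overhead is included.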

The feel:

OLMo 3-Think feels deliberate. The chain-of-thought traces are explicit: you can watch it reason through problems step by step. Response quality is solid, especially on math and coding, where it occasionally beats models trained on 6x more tokens.
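If you want to log or hide the reasoning separately from the final answer, a small helper can split the two. This is a minimal sketch that assumes the trace is delimited by `<think>` and `</think>` tags; that delimiter is an assumption here, so check the model's chat template and pass different tags if yours differ. The function name `split_reasoning` is hypothetical.

```python
def split_reasoning(
    text: str,
    open_tag: str = "<think>",    # assumed delimiter, verify against the chat template
    close_tag: str = "</think>",  # assumed delimiter, verify against the chat template
) -> tuple[str, str]:
    """Return (reasoning, answer) from a raw completion.

    If no closing tag is found, the whole text is treated as the answer.
    A missing opening tag is tolerated, since some chat templates place it
    in the prompt rather than in the generated text.
    """
    head, sep, tail = text.partition(close_tag)
    if not sep:
        return "", text.strip()
    reasoning = head.split(open_tag, 1)[-1].strip()
    return reasoning, tail.strip()


reasoning, answer = split_reasoning("<think>2 + 2 = 4</think>The answer is 4.")
print("reasoning:", reasoning)
print("answer:", answer)
```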

The 7B variant is genuinely usable on modest hardware. The 32B variant is where the magic is, but you’ll need a serious GPU setup or cloud inference.

What stands out is the vibe of the project: this is academic research done right, not a company hedging its open-source bets.

Verdict

Use it when:

You need to understand why a model does what it does — research, audits, education

Apache 2.0 matters — commercial use without restrictions

You’re doing RL research and need documented training pathways

You want Western/American provenance without Chinese model concerns

Skip it if:

You need absolute peak performance on broad knowledge tasks — Qwen 3 32B is still slightly ahead

You’re resource-constrained and need MoE efficiency — MiMo or Nemotron might serve you better

Community ecosystem matters more than openness — Llama has more tooling

Sovereignty Score

Maximum

This is the gold standard for open AI. Full training data, full code, full checkpoints, Apache 2.0 license. You can literally reproduce the model from scratch. No other frontier-class model comes close to this level of openness.

If sovereignty is your priority, OLMo 3 is the answer.

Model: OLMo 3 (7B, 32B variants)

Type: LLM

Size: 7B / 32B dense (no MoE)

VRAM: 7B ~8GB quantized | 32B ~20GB Q4, ~64GB FP16

Run it: Ollama, HuggingFace, vLLM, LM Studio
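As a rough sketch of the Ollama route, the snippet below sends one prompt to a locally running Ollama server over its HTTP chat endpoint, using only the Python standard library. Port 11434 and the `/api/chat` route are Ollama's defaults. The model tag in the usage comment is a placeholder assumption, so run `ollama list` to find the tag you actually pulled. The helper names `build_chat_request` and `ask` are hypothetical.

```python
import json
import urllib.request

# Default local Ollama endpoint; adjust if your server listens elsewhere.
OLLAMA_CHAT_URL = "http://localhost:11434/api/chat"


def build_chat_request(model_tag: str, prompt: str) -> dict:
    """Build a non-streaming, single-turn payload for /api/chat."""
    return {
        "model": model_tag,
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,  # ask for one JSON object instead of a stream of chunks
    }


def ask(model_tag: str, prompt: str, url: str = OLLAMA_CHAT_URL) -> str:
    """Send one prompt to a running Ollama server and return the reply text."""
    body = json.dumps(build_chat_request(model_tag, prompt)).encode("utf-8")
    request = urllib.request.Request(
        url, data=body, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(request, timeout=300) as response:
        return json.loads(response.read())["message"]["content"]


# Usage (needs a running server and a pulled model; the tag is a placeholder):
# print(ask("olmo-3:7b", "Explain what a dense transformer is in two sentences."))
```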

