SAM 3

Model reviews for builders covering open/local and frontier models — with honest takes on what actually works and sovereignty vs capability tradeoffs.

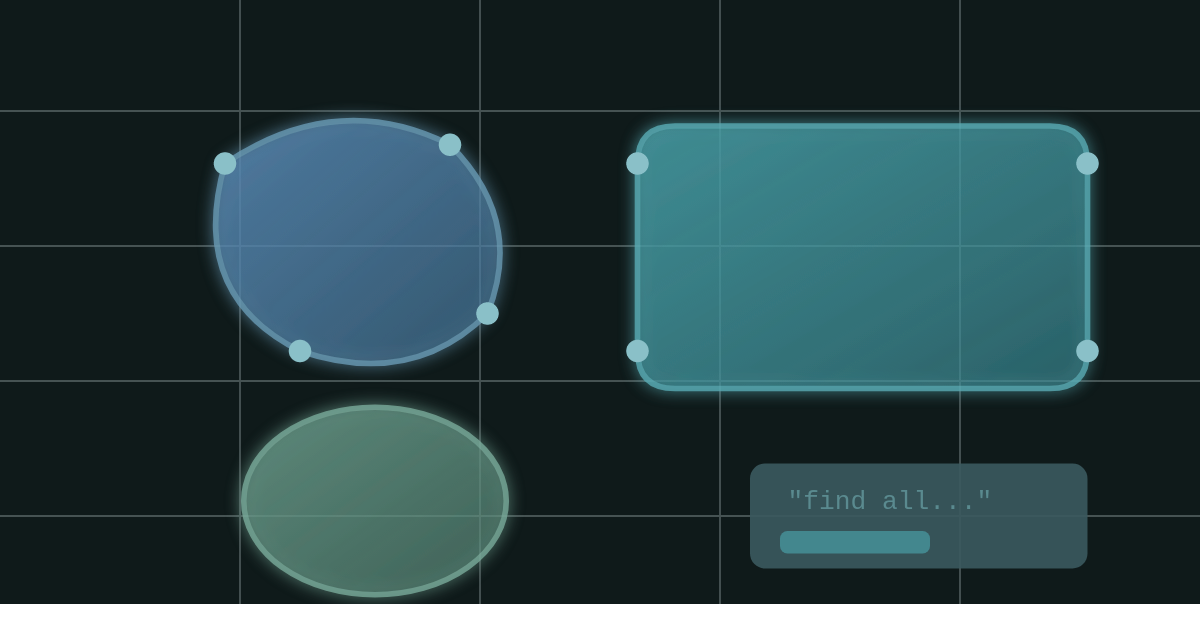

SAM 3 is Meta’s third-generation segmentation model, and the first in the series that understands language. Previous SAM models needed you to click, draw boxes, or provide masks. SAM 3 accepts text prompts like “the striped red umbrella” and finds every instance in the image or video. Describe what you want to see in a video and SAM 3 finds it, segments it, and tracks it through time.

The training data is staggering: a custom data engine annotated over 4 million unique concepts. Meta’s new SA-Co benchmark covers over 50x more concepts than existing segmentation benchmarks, and Meta claims a 2x performance gain over prior systems on it.

Released November 2025 under a custom SAM License. Commercial use allowed, but read the terms — it’s not Apache 2.0.

Details

Strengths:

Language-native segmentation — Text prompts open up use cases that visual prompts can’t touch. “Find every person wearing a red hat” just works.

Massive concept coverage — 4M+ concepts in training means it generalizes to things other models haven’t seen. Wildlife, underwater imagery, edge cases.

Video tracking included — SAM 2’s memory architecture carries over. Detect once, track continuously.

Fast inference — 30ms per image with 100+ objects on H200. Near real-time for video with ~5 concurrent objects.
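To put the 30ms figure in context, here is a minimal sketch that converts a per-frame latency into throughput and checks it against a frame-rate budget. The function names are illustrative, not part of any SAM 3 tooling, and the 30ms number is Meta's per-image figure on an H200. Video latency grows with the number of tracked objects, so treat the result as an upper bound rather than a video benchmark.

```python
def frames_per_second(latency_ms: float) -> float:
    """Convert a per-frame latency in milliseconds to frames per second."""
    if latency_ms <= 0:
        raise ValueError("latency_ms must be positive")
    return 1000.0 / latency_ms


def fits_realtime(latency_ms: float, target_fps: float = 30.0) -> bool:
    """Return True if one frame is processed within the per-frame time budget."""
    budget_ms = 1000.0 / target_fps
    return latency_ms <= budget_ms


# Meta's reported single-image latency on an H200 (from the review above).
image_latency_ms = 30.0
print(f"{frames_per_second(image_latency_ms):.1f} images/s")  # 33.3 images/s
print(fits_realtime(image_latency_ms, target_fps=30.0))       # True
```

At 30ms per frame the budget for 30 fps (about 33.3ms) is met with little headroom, which is consistent with the "near real-time" wording for a handful of concurrent objects.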

Weaknesses:

Server-scale model — 848M parameters puts this firmly in “needs a real GPU” territory. Not running on your laptop.

Custom license — SAM License allows commercial use but with restrictions. Patent lawsuit provisions, California jurisdiction, Meta can change terms. Not the clean Apache 2.0 you’d want.

Struggles with complex descriptions — “The second book from the right on the top shelf” breaks without multimodal LLM integration. Open-vocabulary doesn’t mean infinite vocabulary.

Fine-tuning required for niche domains — Zero-shot generalizes well, but fine-grained out-of-domain concepts need adaptation.

The feel:

SAM 3 feels like the segmentation model that should have existed years ago. Text-to-mask is natural — you think in concepts, not coordinates. The unified architecture means you’re not juggling separate detection and tracking pipelines.

The 16GB VRAM requirement is reasonable for the capability. This isn’t a model you’ll run on edge devices, but any modern workstation or cloud GPU handles it fine. Inference speed is impressive — you can integrate this into real-time workflows without painful latency.
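A quick back-of-the-envelope check shows how the weights relate to that VRAM figure. This sketch only multiplies the 848M parameter count by the bytes per parameter for common dtypes. It assumes the ~3.4 GB size quoted in the spec list refers to float32 weights, and it says nothing about activations or tracking state, which presumably account for the rest of the budget.

```python
# Bytes used to store one parameter in each dtype.
BYTES_PER_PARAM = {"float32": 4, "float16": 2, "bfloat16": 2}

SAM3_PARAMS = 848_000_000  # parameter count quoted in this review


def weights_gb(num_params: int, dtype: str = "float32") -> float:
    """Approximate size of the raw weights in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


for dtype in ("float32", "float16"):
    print(f"{dtype}: {weights_gb(SAM3_PARAMS, dtype):.2f} GB")
# float32: 3.39 GB
# float16: 1.70 GB
```

The weights alone take a fraction of 16GB even in float32, so the headroom goes to inference-time memory rather than the model file itself.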

What stands out is the breadth. Most segmentation models specialize. SAM 3 handles people, products, wildlife, underwater scenes, furniture — the 4M concept training shows.

Verdict

Use it when:

You need text-promptable segmentation — “find all X” workflows

Video tracking matters — detect once, track continuously

Your domain is covered by broad training — most common objects and scenes

You have GPU resources — 16GB VRAM, not edge deployment

Skip it if:

You need edge deployment — 848M parameters won’t fit on mobile or embedded

Apache 2.0 license is a hard requirement — SAM License has restrictions

Your domain is highly specialized — may need fine-tuning for niche concepts

You only need basic instance segmentation — SAM 2 is lighter and may suffice

Sovereignty Score

Medium

Open weights, open code, commercial use allowed — but the custom SAM License isn’t true open source. Meta retains control over license terms and can modify them. Patent provisions add legal complexity. You can run this on your own hardware, but you’re operating under Meta’s terms, not community-owned infrastructure.

For segmentation specifically, there aren’t many fully open alternatives at this capability level. SAM 3 is the practical choice, but know you’re trading some sovereignty for capability.

Model: Segment Anything Model 3 (SAM 3)

Type: Vision / Segmentation

Size: 848M parameters (~3.4 GB)

VRAM: ~16GB (fits comfortably; the 30ms inference figure was measured on an H200)

Run it: PyTorch, Hugging Face, facebookresearch/sam3

Sovereign Model Series is part of Loopcraft — true individual power in the age of AI.